Microsoft Cognitive Services – AI to BI

Artificial Intelligence (AI) is a way of making machines think and react like humans. Machine Learning (ML) is a subset of AI, and Microsoft Cognitive Services is built using ML.

What is Microsoft Cognitive Services?

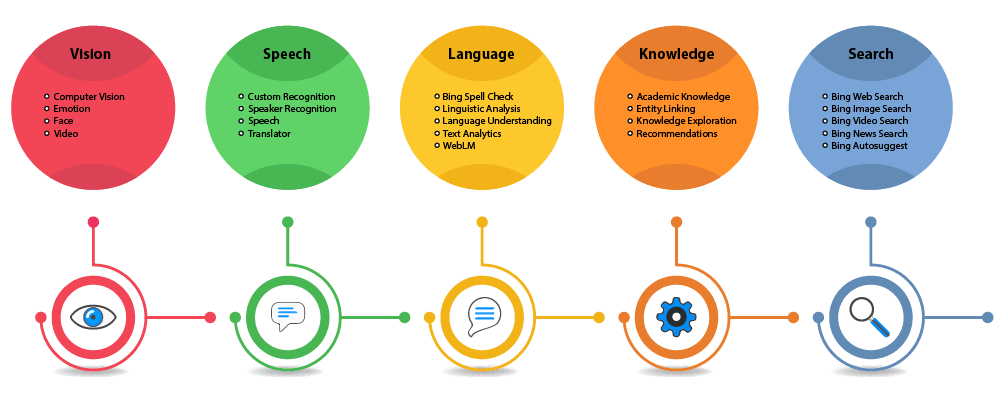

Microsoft Cognitive Services is a set of APIs, SDKs and services that developers can use to make their applications more intelligent, engaging and discoverable. It helps developers build the next generation of applications that can see, hear, speak, understand and interpret using natural methods of communication. Microsoft showcased these services at Build 2017, and they quickly became popular thanks to their reliable, fast and accurate output.

Microsoft Cognitive Services (MCS) provides several types of APIs that you can integrate directly into your app across devices and platforms such as Android, iOS and Windows. Its intelligent features include emotion detection, face, speech and vision recognition, and image analysis. All of these APIs are found under the “AI + Cognitive Services” section of the Microsoft Azure portal. MCS offers a large number of APIs; here are the most interesting and useful ones.

The Vision API provides algorithms that help moderate content and build personalized applications by analyzing images, recognizing emotions, verifying faces, detecting faces in videos and more. The Face verification API checks how likely it is that two faces belong to the same person and returns a confidence score for that likelihood. The Face detection API detects human faces in an image and provides information about each person’s age, emotion, gender, pose, smile and facial hair. Optical character recognition (OCR) is also supported; it detects text in an image and extracts the words.
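To make the confidence score concrete, here is a minimal Python sketch of how an application might interpret a face verification result. It does not call the service. The response shape (`isIdentical`, `confidence`), the helper name `interpret_verification` and the 0.5 threshold are assumptions for illustration, so check the current Face API reference before relying on them.

```python
from dataclasses import dataclass


@dataclass
class VerificationDecision:
    same_person: bool
    confidence: float
    label: str


def interpret_verification(result: dict, threshold: float = 0.5) -> VerificationDecision:
    """Turn a verification response into an app-level decision.

    `result` is ASSUMED to look like {"isIdentical": bool, "confidence": float}.
    The app applies its own threshold to the confidence score instead of
    trusting the service's boolean, so stricter apps can raise the bar.
    """
    confidence = float(result["confidence"])
    if not 0.0 <= confidence <= 1.0:
        raise ValueError(f"confidence out of range: {confidence}")
    same_person = confidence >= threshold
    label = "match" if same_person else "no match"
    return VerificationDecision(same_person, confidence, label)


# Hand-written sample response, not real service output.
sample = {"isIdentical": True, "confidence": 0.87}
decision = interpret_verification(sample)
print(decision.label, decision.confidence)
```

A security-sensitive application could pass a higher `threshold` (for example 0.8) so that only very confident matches are accepted.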

The Speech API brings spoken language into your application through speech recognition, translation of real-life conversations and text to speech. It also offers a custom speech service, which lets you tailor the speech recognizer’s language model to the vocabulary of your application and the speaking style of your users.

The Language API allows an application to process natural language so that it can evaluate sentiment and topics and learn to recognize what the user wants. Language Understanding Intelligent Service (LUIS) provides simple tools for building your own language model, which allows any application to understand your commands and act accordingly. The Language API also provides sentiment analysis, with which an app can identify positive and negative sentiment.
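The "understand your commands and act accordingly" step can be sketched as a small dispatcher. This is only an illustration, not LUIS client code: the prediction dictionary is hand-written, its field names (`topScoringIntent`, `intent`, `score`, `entities`) are assumed to match what a language model would return, and the intent names and handlers are hypothetical.

```python
from typing import Callable, Dict, List


def turn_on_lights(entities: List[dict]) -> str:
    return "Turning the lights on"


def turn_off_lights(entities: List[dict]) -> str:
    return "Turning the lights off"


# Hypothetical intent names mapped to the functions that act on them.
HANDLERS: Dict[str, Callable[[List[dict]], str]] = {
    "TurnOnLights": turn_on_lights,
    "TurnOffLights": turn_off_lights,
}


def act_on_prediction(prediction: dict, min_score: float = 0.6) -> str:
    """Run the handler for the top intent, or fall back if unsure."""
    top = prediction["topScoringIntent"]
    handler = HANDLERS.get(top["intent"])
    # Unknown intents and low-confidence predictions get the same fallback.
    if handler is None or top["score"] < min_score:
        return "Sorry, I did not understand that"
    return handler(prediction.get("entities", []))


# Hand-written sample prediction, not real service output.
prediction = {
    "query": "switch the lights on please",
    "topScoringIntent": {"intent": "TurnOnLights", "score": 0.93},
    "entities": [],
}
print(act_on_prediction(prediction))
```

Keeping the intent-to-handler mapping in one dictionary means new commands can be added without touching the dispatch logic.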

The Knowledge API collects and maps complex data in order to solve particular problems such as recommendations and semantic search. The Recommendations API learns from previous transactions to predict which items are most likely to be purchased together. QnA Maker is another useful feature; it extracts all possible question-and-answer pairs from user-provided content such as FAQ URLs and documents.
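The idea of learning from past transactions which items are bought together can be illustrated with plain co-occurrence counting. The sketch below is a simplified stand-in, not the algorithm the service uses; the function names and the sample transactions are invented for the example.

```python
from collections import Counter
from itertools import combinations


def co_purchase_counts(transactions):
    """Count how often each unordered pair of items shares a transaction."""
    counts = Counter()
    for basket in transactions:
        # sorted(set(...)) drops duplicates and gives each pair a stable order.
        for pair in combinations(sorted(set(basket)), 2):
            counts[pair] += 1
    return counts


def frequently_bought_with(item, transactions, top_n=3):
    """Return up to top_n (other_item, count) pairs bought alongside item."""
    scores = Counter()
    for (a, b), n in co_purchase_counts(transactions).items():
        if a == item:
            scores[b] += n
        elif b == item:
            scores[a] += n
    return scores.most_common(top_n)


# Made-up purchase history for illustration only.
transactions = [
    ["laptop", "mouse", "laptop bag"],
    ["laptop", "mouse"],
    ["phone", "phone case"],
    ["laptop", "laptop bag"],
]
print(frequently_bought_with("laptop", transactions))
```

A production recommender would also account for item popularity and sparse data, which raw counts ignore.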

The Search API helps integrate Bing search results into your application through Bing Autosuggest, Bing Image Search, Bing News Search, Bing Video Search, Bing Web Search, Bing Custom Search and Bing Entity Search.